Research led by Trinity Hall’s Professor Hatice Gunes has revealed that robots can be better at detecting mental wellbeing issues in children than parent-reported or self-reported testing. We spoke to her about her work and the future of robots in society.

What is your research?

My research interests are in affective computing and social signal processing, which lie at the crossroads of multiple disciplines, including computer vision, signal processing, machine learning, multimodal interaction and human-robot interaction.

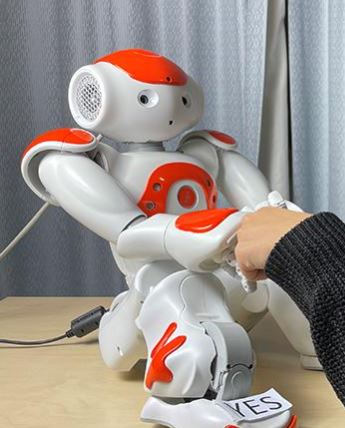

My current research vision is to create autonomous systems and social robots with embodied artificial socio-emotional intelligence that can engage people in long-term interactions through adaptation and personalization.

Essentially, this means developing algorithms that sense humans and their nonverbal behaviours, such as facial and bodily expressions, interpret these on the machine side, and then generate appropriate and meaningful machine behaviour.

I am particularly interested in using such technology to improve the wellbeing of people and to help make people more resilient to life’s challenges.

Is there a paradox in using “unemotional” AI to help humans in their wellbeing?

When people see professionals such as coaches, what matters is how these people express nonverbally that they are listening to us, that they empathise with us somehow, and this is usually achieved through active listening and back-channelling – ie prompts such as “I see”, “all right,” “go on,” or “uh-huh” and/or nodding the head etc. These indicate that the professional has heard what the patient has said and is interested in hearing the full story.

Humanoid (human-looking) and social robots, with their varied forms and sizes, can emulate such behaviours and are therefore arguably the most “emotional” AI that currently exists. There are of course virtual conversational agents, in the form of mobile applications and chatbots. However, most of these agents are not interactive, and even when they are, they rely on text-based, non-adaptive communication that takes very little human feedback into account. A robot, by contrast, is embodied: it has a physical body and presence, and can be designed to adapt to its users, and this really helps with engaging people.

Is there a difference in the way different age groups interact with robots?

The usage of and the need for a robot very much depend on the application and the context. For example, robots could be used alongside experts in robot-enhanced therapy for children with autism spectrum disorders, or in care homes to engage the elderly physically and mentally for therapeutic purposes, in addition to the regular support they would get. Or they could be used as tele-operated entities to give humans a physical presence when they cannot be in a certain location or space themselves (eg during the pandemic).

So, yes, there would be a difference in the way different age groups and different user groups interact with robots, depending on their specific needs: for instance, a user group with special needs versus visitors using robots as museum guides.

What does being a fellow of Trinity Hall mean to you and does it help or influence your research or teaching in any way?

Becoming a fellow of Trinity Hall and taking part in college activities as a Director of Studies for Computer Science students has enabled me to finally understand the multi-faceted Cambridge collegial life, its purpose and its practical function. I enjoy connecting with students from the beginning, when we interview them during their application process, and then seeing them evolve and develop throughout their studies both in College and the Department. I enjoy small-group supervisions, as they give me a chance to see the difference they make to students’ learning and understanding. Being part of committees, for example the Junior Disciplinary Committee, also makes me appreciate all the hard work different members of staff put in and the challenges they face, particularly in difficult times such as the pandemic. I believe the whole process has helped me become a better and more rounded academic, by connecting with people from all walks of life and feeling part of a living community.

Will we ever be replaced by robots?

The availability of commercial robotic platforms and developments in collaborative academic research give us a positive outlook, but the capabilities of current social robots are quite limited. Some major limitations relate to the physical capabilities of these robots: battery life is short (they overheat easily and cannot run for more than a certain number of hours), and the on-board hardware is not sufficient to run complex computer vision and machine learning algorithms in real time, so additional computers or cloud services are usually needed. Also, commercial robots do not adapt: they always provide the same response to a particular human action, which can negatively impact uptake once the novelty effect wears off. So, with these in mind, I would say that robots will not be able to replace us humans any time soon. It is more promising to use robots for more mechanical jobs, such as picking vegetables in the agri-food sector.

Do you think robots will become a commonplace machine: something we’ll see around College every day?

See above – we can perhaps use them for very well-defined activities, such as welcoming guests at the Porters’ Lodge or giving a guided tour around the college with historical information. But using them for more complex tasks still requires a lot of further work in this area.

What would you tell a 16-year-old hoping to get into your area of research?

I have already been contacted by curious 16-year-olds. One of them in fact came all the way to the Department to talk to me about my research.

I usually tell them to be curious: to attend science festivals and see things in action for themselves, to ask questions, to talk to the scientists themselves, and even to visit science exhibitions like ‘the 500-year history of robots’. These are much more informative than watching science-fiction movies and series, which are a bit disconnected from reality and create wrong expectations in young people. I also tell them that computer science is now incredibly versatile, covering areas such as medical imaging, quantum computing, mobile computing, affective computing and social robotics, and that they should therefore keep an open mind about it.

More information

Professor Hatice Gunes is a Professor of Affective Intelligence and Robotics at the University of Cambridge’s Department of Computer Science and Technology and a Staff Fellow and Director of Studies in Computer Science at Trinity Hall. She will deliver a keynote talk titled ‘Affective Intelligence for Human-Robot Interaction Research: Lessons Learned along the Journey’ at the AAAI Fall Symposium Series on Artificial Intelligence for Human-Robot Interaction on 19 November.

In September she gave a talk at the Royal Institution titled “Can machines be emotionally intelligent?”. It can be viewed on YouTube.

For more on her research into children’s wellbeing and robots see this University of Cambridge feature.